How Taika Waititi Shoots A Film At 3 Budget Levels

In this video I’ll take a look at just three feature films that he has directed at three increasing budget levels to analyse the techniques that he uses to make them.

INTRODUCTION

If there’s one word that sums up Taika Waititi’s approach to directing it’s tone. His movies are entertaining, uplifting and lean into an unforced comedic tone with a large focus on the writing, casting and performances of the actors.

With a career in film that has involved years of work in commercials, music videos and TV series, in this video I’ll take a look at just three feature films that he has directed at three increasing budget levels to analyse the techniques that he uses to make them.

HUNT FOR THE WILDERPEOPLE - $2.5 MILLION

His love for comedy began early when he formed a duo with Jemaine Clement - who he’d later work with on other projects. He also started making short films. One of them, Two Cars, One Night, earned him an Academy Award nomination.

Around this time he read Wild Pork and Watercress and decided he would try to write a screenplay adaptation of the book.

“I wrote the first draft of this in 2005. I hadn’t made any other features before then and I found it really difficult adapting the book because I’d never adapted anything and I thought you needed to be super true to the material. Basically lift everything from the book and put it into a movie. I put that to the side to concentrate on some other stuff and went off and made three other features. Then coming back to the material I realised, ‘Oh you don’t have to do that at all, you can just do whatever you want’. You put it through your filter, you know.”

This idea of putting a screenplay or an idea through his own filter is a consistent feature of his work: whether he’s writing his own original idea, working with a screenwriting collaborator or bringing a massive blockbuster script to the screen. But we’ll get to that later.

He takes a screenplay and applies his filter for comedy and adventure to arrive at an end product which has his recognisable authorship. This filter comes from a combination of the writing process, his approach to directing actors, and how he and his creative team visually tell the story.

“I chose the tone that I wanted as well. I decided I was going to make a comedy that was like an adventure film. I sort of chose stylistically and tonally what I wanted to do and then took the parts of the book that I felt would work in the film I wanted to make and then made up the rest.”

With the final screenplay in place and a budget of approximately $2.5 million, half of which came from the New Zealand Film Commission, he moved to the next step in the process - which is of particular importance to a director who has a large appreciation for performance - casting the actors.

This involved casting and directing a child actor to play the role of Ricky Baker. Directing children can be a challenge. Acting, of course, takes years of practice in manipulating your emotions in a controlled way.

The level of control and consistency required is difficult for most children. However, if you find the right child who is able to lock into the character, their performance may have a purity to it that might surpass their adult counterparts as it is more natural and less constructed.

“What the trick is when you are auditioning you search for the kid that resembles the character the most in personality. So, you never try and get a kid to pretend they are someone else. You choose the Ricky Bakers of the world and find the one that is closest to what you want in the film. And then all they have to do is remember the lines.”

With the cast in place and enough funding to shoot for a brief 25 days, Waititi brought Australian cinematographer Lachlan Milne onto the project to shoot the film.

They decided on a single camera approach for most of the movie and rented an Arri Alexa XT with, based on some behind the scenes pictures, what looks like Cooke S4s and an Angenieux 12:1 zoom.

For the car chase scene, which they shot over a couple of days, they used five different cameras to get enough coverage on the relatively low budget: three Alexa XTs which shot the on-the-ground footage and two Red Epics, with the Angenieux 24-290mm mounted on a Shotover on a helicopter.

To prepare, the DP used a DSLR camera to shoot different angles of a model car which could then be cut into a sort of animatic or storyboard so that they had a list of the shots they needed to get on the day.

Since most scenes take place outdoors, lighting continuity was always going to be tricky. Milne always tried to orientate day exteriors so that the actors were backlit by the sun.

He also leaned into a natural sunlight look and didn’t use any diffusion scrims over the actors to soften the light. He didn’t want perfectly soft light that would be too pretty.

Also, placing scrims overhead limits the movement of the actors and how wide the shot can be. The frame needs to be fairly fixed otherwise the legs of the stands will start getting into the shot.

The director wanted to draw on the visual style of films from the mid-80s, such as films by Peter Weir, which didn’t have visual effects and didn’t use fancy gear like Technocranes to move the camera. Therefore they used the 24-290mm zoom to punch into shots rather than using more expensive, less practical and slicker camera moves.

Something about the slow zooms also effectively built up tension in scenes and, when combined with other wider shots, helped land some of the comedic gags. Another way he accentuates comedy is with the music and sound, and lingering on wider shots and not cutting too quickly.

Overall, he used the relatively low two and a half million dollar budget to produce a bigger looking movie which mainly had contained scenes with one large chase scene set piece, with a large focus on casting and performances, almost no CG work, and an experienced crew which moved quickly with a single camera to pull off the entire movie on a tight five week schedule.

JOJO RABBIT - $14 MILLION

“There was no real pitching process for this. So I didn’t go to studios and say ‘Hey, this is my idea for a film’. I realised early on it’s a really hard film to pitch. No one really wants to hear a pitch like this, so I’m going to write a script that’s really good and I’m going to let that be the pitch.”

A screenplay looking at World War Two through the eyes of a young boy in the Hitler Youth, where an imaginary friend version of Hitler plays a supporting role, is certainly a bit of an odd pitch.

But, after sending the completed script around, Searchlight took an interest in the project and agreed to make the film on one condition, that Waititi play Hitler. Like Hunt for the Wilderpeople, Jojo Rabbit was also an adaptation from a novel, Caging Skies.

His screenplay and vision for the film took a different approach to how most World War Two films are presented and once again drew from his own tonal sensibilities towards comedic entertainment that is uplifting.

“We can’t get complacent and keep making the same style, the same tonal style of film: it’s drama, it’s depressing…everything is desaturated and browns and greys. Crazy idea, we can also maybe create something that is colourful and bright and has humour in it. I knew the tone really early on.”

With a budget of $14 million from Fox Searchlight and TSG Entertainment they tried to find a base for production that would give them the locations they needed and the most bang for their buck.

Initially, the plan was to shoot in Germany. However, since German laws meant that child actors could only work for around three hours per day, and the movie was filled with child actors, this would have almost doubled the number of shooting days they needed.

Eventually they decided on the Czech Republic which had buildings that came ready made to look like they belonged in the World War Two era, a reliable film industry and labour laws which allowed them to schedule the shoot into 40 days of filming.

From the budget, $800,000 was given to the art department, which may sound like a lot but is actually very low for purchasing all the army equipment and creating the sets for a period film. So, having town locations which were already almost good to go helped create the period world on the low budget.

Mihai Mălaimare Jr. was brought on board as the cinematographer on the film. Prior to shooting, the director and the DP collaborated to devise the format that was right for the project.

“We were both really attracted to 1.33, but the audience is not as used to that aspect ratio anymore. We were trying to work out how it would work for us framing wise and realising how much more top and bottom it would reveal in that aspect ratio. That was the only thing that made us try the 1.85:1. One thing that Taika really responded to and I wanted to try for so long was anamorphic 1.85.” - Mihai Mălaimare Jr.

To get this squarer aspect ratio with anamorphic lenses he used an unusual technique. Hawk 1.3x anamorphic lenses are designed to be shot with a 16:9 size sensor and get a 2.40:1 aspect ratio. However, if you shoot these lenses with a 4:3 sensor size, de-squeeze them 1.3x and then crop just a tad you can get a 1.85:1 aspect ratio that maintains an anamorphic look. Shooting the 1.3x V-Lites on a 4:3 sensor on an Alexa XT gave him the best of both worlds: the squarer aspect ratio along with anamorphic falloff, without needing to do much cropping.
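The arithmetic behind this trick can be sketched in a few lines. This is only a rough illustration that uses the nominal 4:3 and 16:9 sensor ratios rather than the exact recording dimensions of the Alexa XT, which are not given here, and the function names are made up for the example.

```python
def desqueezed_ratio(sensor_width, sensor_height, squeeze):
    """Aspect ratio of the image after the anamorphic squeeze is undone."""
    return (sensor_width / sensor_height) * squeeze


def crop_fraction(native_ratio, target_ratio):
    """Fraction of one dimension that must be trimmed to reach the target ratio."""
    if target_ratio >= native_ratio:
        # Target is wider than the native image: trim height (top and bottom).
        return 1 - native_ratio / target_ratio
    # Target is narrower than the native image: trim width (left and right).
    return 1 - target_ratio / native_ratio


# Nominal sensor ratios (assumed), with the 1.3x squeeze of the lenses.
ratio_4x3 = desqueezed_ratio(4, 3, 1.3)    # about 1.73:1
ratio_16x9 = desqueezed_ratio(16, 9, 1.3)  # about 2.31:1

# How much height has to be cropped to land on the delivery ratios.
crop_185 = crop_fraction(ratio_4x3, 1.85)
crop_240 = crop_fraction(ratio_16x9, 2.40)

print(f"4:3 sensor de-squeezed:  {ratio_4x3:.2f}:1, crop {crop_185:.1%} of height for 1.85:1")
print(f"16:9 sensor de-squeezed: {ratio_16x9:.2f}:1, crop {crop_240:.1%} of height for 2.40:1")
```

Under these assumptions the 4:3 image de-squeezes to roughly 1.73:1, so only a thin slice of the top and bottom needs to go to reach 1.85:1, which matches the idea of cropping "just a tad".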

To portray a brighter version of reality, through the eyes of a child, they used a bright colour palette with lots of vibrant greens, blues, yellows and of course reds. They also used more whimsical slow motion and central, front-on, symmetrical compositions, which placed characters in the middle of the frame and used natural framing devices on the set such as doors, picture frames, tables or tiling for balance.

Much of the tonal balance was adjusted in the edit. Whereas some directors may despise test screenings - showing a cut of the film to an audience prior to release - Waititi likes to use them in order to gauge the effectiveness of the pacing of different versions of the edit.

“It was more the tonal balance. So I test my films all the time with audiences. So you get feedback. What do you think of this? Were you bored here? Were you overstimulated here? Was it too funny here? Was it too sad here? And then just finding a balance.”

Jojo Rabbit was produced on a higher $14 million budget that accommodated more shoot days, a war set piece, lots of extras, some star performers, and period correct production design.

THOR: RAGNAROK - $180 MILLION

“I have a theory that there are periods when the economy is suffering and people don’t have a lot of money to spend, they don’t want to go and see films about how tough life is for people. I think the reason that a lot of those dramatic films are not doing well is because people want an escape, which is why a lot of the superhero films are doing really well.”

This movie involved a step up from a fairly regular budget to what I guess you could best call a Marvel budget.

To get the job Waititi pitched his idea of the film, which involved creating a ‘sizzle reel’ - basically a montage that he cut to Immigrant Song by Led Zeppelin using footage from other films. The studio were also enthused by his idea to bring a vitality to the movie and his trademark brand of humour to the characters.

Working on an MCU movie means that the director basically has whatever technical resources they can dream of, has as much time as they need (in this case 85 shoot days, or a full two years when including pre and post production) and can use the massive budget to hire pretty much whatever actors they want.

However it also means that most of the look of the film will be constructed after shooting with CGI and to a large degree will be controlled by the studio. You know, the desaturated, CG-laden feeling all Marvel movies have.

What falls on the director therefore is not so much creating the aesthetic style, but rather managing the project and creating the overall tone by using performances and storytelling.

To wring more of a comedically authentic tone from the script, he worked with the actors to achieve a more natural delivery of lines.

“The thing about a lot of studio films and Hollywood films especially is that when you hear a joke in these films you get the feeling that the joke was written about a year before they shot it and then a couple of people in the board room were like, ‘and then he’s gonna say….’ and they’re like ‘that’s gonna be amazing when we shoot that in a year.’”

Instead they worked with a script that had suggested dialogue and jokes. Once he and the actors were on set they could then work with that material until they found what delivery worked naturally, without being so tightly constrained to the original shooting script.

The film was shot by Javier Aguirresarobe, who has a long career working on a range of both low and high budget movies. About 95% of the shoot was done with bluescreens.

This meant that the DP lit the actors as a way to suggest to the post production supervisor where, with what intensity, quality and colour temperature they imagine the light to be. CGI is then used to construct the rest of the world and the light that is in it. Motion capture suits were used to capture the movement of computer generated characters.

The film was largely shot on the large format Alexa 65 with Arri Prime 65 and Zeiss Vintage 765 lenses. The Phantom Flex 4K was also used for shots which needed slow motion.

Thor: Ragnarok therefore used its enormous budget to hire a cast of famous actors, fund a very lengthy 85 shooting days with all the gear they could imagine, loads of action scenes, and effectively pay for two years of production time that included expensive CGI work in almost every shot in the entire movie, all the while Waititi maintained his grasp of a perfect comedic, adventure tone.

5 Steps To Shooting A Documentary

Let's unpack five steps that you can take whenever you get the opportunity to work on a new documentary project.

INTRODUCTION

The world of documentary is one that is fundamentally different from more planned forms of filmmaking, like music videos, commercials and features. What differentiates these disciplines is that the latter are more pre-planned and structured ahead of shooting, while documentaries rely on a broader plan with inevitably less precision.

This means that documentary cinematographers need to always be on their toes and be quick to adapt to unexpected situations as they unfold. Having said that, this doesn’t mean that you should just go in with a camera and rely purely on luck and instinct. There are some clearly defined ways that we as filmmakers can use to shoot more consistent, stronger content.

So, I thought I’d use this video to unpack five steps that I take whenever I get an opportunity to work on a new documentary project.

1- IDENTIFY THE CONTENT

Gone are the days where most documentaries meant setting up a few sit down interviews which would then get cut with archival footage.

Today documentary, or documenting a version of reality, comes in many forms - from commercial, branded content that uses touches of non-fiction, to purely observational filmmaking, re-creations of events, nature documentaries, traditional talking head documentaries and everything in between.

It may seem obvious, but as a cinematographer, the first thing you need to do when starting a new project is clearly define the form of the film and identify the types of scenarios that you will be filming.

The reason this is so important is twofold: it’ll help you to identify the gear you need to bring along and will help you to nail down a visual style. But we’ll go over those two points separately.

When you’re dealing with real life situations, planning and having a clear vision for what you need to get will make it far easier to execute on the day. Half of making documentaries comes from producing and putting yourself in the right situation to capture whatever the action is.

Most of these decisions come from the director. In documentary work, the director may also be the cinematographer. If there is a dedicated cinematographer then knowing the form of the project and the kind of footage needed is still crucial.

For example, you may go into a shoot knowing that you need an interview with the main character that should be prioritised, some B-roll footage of the location and one vérité scene with another character.

If the schedule of the main character changes and they suddenly aren’t available to do an interview in the morning anymore then you know that: first priority is scheduling the main interview, second priority is finding time with a secondary character, or looking for a potential scene to present itself and thirdly the B-roll can be gathered throughout the day in the gaps of the schedule.

Making a list that prioritises footage that is a must have, footage that would be nice to have and footage that would be a bonus to get is useful going into the shoot. It’s always easier to improvise and get unexpected, magical moments when you already have a solid base or plan to work from that tells the core of the story.

Once you’ve put the edges of the puzzle in place, it’s much easier to then fill in the rest.
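For anyone who likes to keep this kind of list on a phone or laptop, here is a minimal sketch of a prioritised shot list. The three tier names, the `Shot` class and the way the example footage is assigned to tiers are all assumptions made for illustration, not a fixed method.

```python
from dataclasses import dataclass

# Assumed tier names: lower number means it gets scheduled first.
TIER_ORDER = {"must have": 0, "nice to have": 1, "bonus": 2}


@dataclass
class Shot:
    name: str
    tier: str


def prioritise(shots):
    """Return the shots ordered by tier, keeping the original order within a tier."""
    return sorted(shots, key=lambda shot: TIER_ORDER[shot.tier])


# Hypothetical shoot day based on the example above.
shot_list = [
    Shot("B-roll of the location", "bonus"),
    Shot("Vérité scene with secondary character", "nice to have"),
    Shot("Interview with main character", "must have"),
]

for shot in prioritise(shot_list):
    print(f"{shot.tier:>12}: {shot.name}")
```

If the schedule changes on the day, the list stays the same and only the times move: the top tier gets rescheduled first and the lower tiers fill the gaps.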

2 - GEAR

As I mentioned, selecting the gear needed for a project will be determined by the kind of scenarios that need to be captured.

For example, a vérité documentary may be captured by a single handheld camera, with a single lens, which also records sound, operated by one person, while larger budget true crime documentaries with re-creation scenes may have an entire crew, complete with a cinema camera package, a lighting package, and a dolly.

Whatever gear is needed on a documentary shoot there is always one certainty: you need to be able to work fast. For that reason, you need to have a high degree of familiarity with the camera you are shooting on. If you need to quickly capture a moment in slow motion can you find the setting within a few seconds? Or if the light suddenly changes and you need to compensate for overexposure can you quickly adjust the ND filter?

This is why going into a shoot I’d recommend configuring the camera in such a way that you are able to make changes as quickly as possible. This may be through user buttons, through having a variable ND filter on the front of the lens, or by having a zoom that you can use to quickly punch in or out to a specific shot size. When you’re capturing real life, you don’t ever want to miss a crucial moment if it can be avoided.

Having less gear also speeds things up. It means less to set up, carry around and to pack away. There’s a sweet spot between having the tools that you need and not having too much stuff to lug around.

Although there are loads of different approaches to selecting gear, let me go over what is a fairly typical setup.

Starting with the camera, a popular choice is something like a Sony FS7, a Canon C300 or something newer like the FX6. These cameras have great codecs that produce high quality images with a relatively small file size - which you need on documentary projects where you often need to shoot a lot of footage. They also come with XLR audio inputs to feed sound directly into the camera and have user buttons and internal ND filters for quick operation.

When it comes to lenses, I personally prefer working with primes, but zooms are probably more popular as they allow you to quickly readjust shot sizes. Something like a 24-70mm is a pretty standard choice. Depending on the content it’s usually useful to also carry a long zoom like a 70-200mm.

I like to carry screw-on filters with me, such as a variable ND and maybe a diffusion filter or a diopter filter, depending on the look.

Then you want a lightweight tripod with a fluid head that is smooth to operate, but light enough to carry around all day and to quickly set up. Many people now like to shoot with a gimbal too.

I also like to carry around a little lighting bag and a stand. This can be used for an on-the-fly interview, bringing up the exposure in a dark space or lighting observational scenes so that they are more ‘cinematic’.

I exclusively choose LEDs that are both dimmable and are bi-colour. This means you can easily change their colour temperature and the intensity of the light with the turn of a knob. Again, speed is key.

3 - VISUAL STYLE

Whether you are conscious of it or not, every decision that a cinematographer makes while shooting contributes to some kind of visual style. Even the act of just picking up a camera quickly and pressing record to capture a moment creates a visual style with a loose, handheld, vérité look.

This visual style may affect the audience in a subtly different way than if the same scene was shot locked off on a tripod, or shot with lots of movement on a gliding gimbal.

There are a million different directions to go in. Maybe you decide on a specific type of framing for the interviews, maybe the entire film is handheld, maybe you only use natural light, maybe you use artificial light to enhance reality, maybe you use a drone to give context to the space, maybe you suspend time by using slow motion, or shoot with a diffusion filter to make the images more dreamy. These are all decisions that influence a film’s visual style.

Therefore the next step in documentary cinematography, before arriving on set, is coming up with an idea for an overarching visual style that supports the film. This style could be rationally decided upon based on thought or based on what feels right.

This step also needs to be considered with the first step of identifying the kind of content you are shooting. You need to find a style that is balanced with what you can realistically achieve. For example if you’re shooting a fast paced fly on the wall documentary it might not be possible to shoot everything from a tripod with perfect lighting.

Usually, I find I have a stronger connection to films that have some kind of visual cohesion and an artistic vision that stretches across the entire doccie.

Of course since we are shooting in unpredictable situations, with less control over the environment, it’ll almost never be possible to get exactly what we want visually.

But, going in with a plan or an idea of the look, or finding the look as you begin shooting, will almost always result in stronger images than if you go into shooting with no vision or ideas at all and just get whatever you can get without giving any thought to how the images look and the feeling they will convey.

4 - SOUND

Next, let’s talk about something that is sometimes loathed by cinematographers, but which is as important, if not more important, than the image: sound.

Some documentaries may have the resources and the need to hire a dedicated sound person, but often in the field of documentary the job of recording sound may fall on the cinematographer.

Therefore it’s important to at least know the basics of how to record sound. There are two ways this is done. The first is with lav mics that are clipped onto the subject and feed a signal wirelessly to a receiver, which is plugged into the camera or a recorder that captures the sound. The second is with a boom mic that can either be mounted on-board the camera, or used by a boom operator on a boom pole. For more on this I made another video on boom operators.

The main point to be aware of as a cinematographer, is that getting good sound may involve compromise. For example, you may want to shoot a beautiful wide shot of a scene, or an interview, but if you are shooting in a noisy, uncontrolled environment you may be forced to scrap that plan and shoot everything in a close up so that you can get the boom mic nice and close to the subject.

It may be frustrating to sacrifice the better shot for the sake of sound, trust me I hate it, but what I always tell myself is that it’s better to get a worse shot that has usable sound, than to get a beautiful shot that has terrible sound.

If you get a beautiful shot but the sound isn’t usable it’ll just end up on the cutting room floor anyway, never seen by anyone besides the editor.

Of course this is dependent on how necessary the sound is, but as a general rule if you’re working with an on-board mic and there is crucial dialogue - prioritise getting usable sound over getting a beautiful image.

5 - COVERAGE

The final step to shooting a documentary is, well, the actual act of shooting it. Understanding coverage, which refers to the angles, shot sizes and way in which a scene is shot is an invaluable skill in documentaries.

While in fiction filmmaking you can shot list, storyboard or consider the coverage of a scene between setups as you shoot it, when you are working in unexpected situations that will only take place once, you have to make these decisions in real time.

It’s a difficult thing to give broad advice on as different scenes can unfold in different ways, but let’s go over some basic ideas for capturing an average vérité scene.

I find it’s useful to edit scenes in your head as you are shooting them. For an average dialogue scene you know you’ll need a few things. One, you’ll need a wider shot that introduces the audience to the space of the location so that they can orient themselves and understand the context. Two, you’ll need a shot of whoever is talking, specifically the main character or characters that you are focusing on. Three, you’ll need to get reaction shots of whoever isn’t talking, so that the editor can use these to shorten a scene.

For example, there may be one sentence at the beginning which is great, then they waffle for a bit, then they have another three sentences which are great. If you have a reaction shot, then you can start on the person saying the first sentence, cut to the reaction shot while you keep going with the dialogue from the end three sentences. Then cut back to the person saying the dialogue. This naturally smooths things over and ‘hides’ a cut.

If you only have shots of whoever is talking, then the editor will have no option but to either select one section of dialogue, for example the final three sentences, or to jump cut - which can be abrasive.

Also remember that the size of a shot affects how an image is interpreted. So for more personal moments you want to try and get as close as you can. However, you also need to take into consideration that your proximity to a person will affect how they act.

If you meet someone for the first time and get right up in their face with a camera immediately they will be put off and likely won’t open up to you emotionally.

That’s why I usually like to start shooting scenes wider and then begin to move closer to them as they become more comfortable with your presence and the conversation starts to heat up.

Covering a scene in a documentary situation comes with experience. It’s like an improvisational dance that needs to balance getting shots that will cut together, making the subject feel natural and at ease and anticipating the right shot size for the right moment.

Although this just touches the surface, if you want to cut a basic, vérité dialogue scene together and make an editor happy, then make sure you get, at a minimum, a shot that establishes the space, a shot of the person talking and a reaction shot of people who are not talking.

Cinematography Style: Janusz Kamiński

In this episode of Cinematography Style I’ll unpack Kaminski’s philosophy on filmmaking that uses visual metaphors to express stories, and give examples of the kinds of gear and technical tricks he’s used as a cinematographer to create images.

INTRODUCTION

With a career in producing images that has spanned decades, it can be tricky to pin down the work of Janusz Kaminski exactly. However, it’s difficult to deny that a large part of his filmography is due to his extensive collaborations with iconic director Steven Spielberg.

This raises the question, how do you separate the creative input of the director and the cinematographer? Is it even possible to do so?

In this episode of Cinematography Style I’ll unpack Kaminski’s philosophy on filmmaking that uses visual metaphors to express stories, and give examples of the kinds of gear and technical tricks he’s used as a cinematographer to create images.

BACKGROUND

During a period of political turbulence in the early 1980s, the Polish cinematographer moved to the United States where he attended university. He decided to take up cinematography and went to film school at the AFI.

He got his first professional job in the industry as a dolly grip on a commercial. The camera operator quickly told him this wasn’t for him. Next, he worked as a camera assistant, where he was again told he wasn’t any good. He then started working in lighting which kicked off his career.

During this time he worked on lower budget productions with fellow up and coming cinematographers such as Phedon Papamichael and Wally Pfister. He also began working as a cinematographer in his own right.

“I was here for 13 years and I shot 6, 7 movies. So I was experienced I just didn’t have that little push. I shot a little movie directed by Diane Keaton. Steven liked the work, called my agent, we met and he offered me to do a television movie for his company and after that he offered me Schindler’s List.”

This collaboration proved to be a lasting one. Over the years they have shot 19 other films together and counting.

Other than Spielberg he’s also shot feature films in many different genres for other directors such as: Stephen Sommers, Cameron Crowe, Judd Apatow and David Dobkin.

PHILOSOPHY

Coming back to the question of how you separate Kaminski’s input from Spielberg’s: in their vast collection of films together, a lot of the overarching visual decision making does come from the director’s side.

Prior to their work together, Spielberg was known for the creative way in which he positioned and moved the camera in order to tell stories. In that way, I think a great deal of the perspective of what the audience sees in the frame comes from him.

For some movies, such as West Side Story, Spielberg uses extensive storyboards to pre-plan the coverage in a very specific way, while other movies like Schindler’s List had surprisingly little planning and were more spontaneous without any shot lists or storyboards.

For this situation, Kaminski used a portable tape recorder to dictate notes about lighting, problems or gear he may need, to bring order to his thoughts and successfully execute the photography as they went.

In terms of the overall look and lighting of Spielberg’s early films, they all followed a similar template that was grounded by a traditionally beautiful Hollywood aesthetic with haze that accentuated an ever present glowing backlight that gave the actors an angelic, rim light halo outline.

The other cinematographers he worked with were intent on servicing this traditional aesthetic.

When Kaminski came on board to shoot Schindler’s List he deconstructed the Hollywood, family-friendly beauty that audiences had come to expect from Spielberg’s work.

“I think the idea of de-glamorising the images, strangely, I’m always interested in that. I didn’t want that classical Hollywood light. I wanted more naturalistic looking. We all want to take chances, because it’s not this comfortable life we’ve chosen where we just make movies and we work with movie stars. We express ourselves artistically through our work and we want to take chances.”

Throughout their collaborations together, Kaminski was able to find a middle ground that balanced Spielberg’s desire for a traditionally beautiful look with his own appreciation for de-glamorised images that could be considered beautiful in a different way.

Another ever present idea in his work is his use of visual metaphors - where the camerawork represents a particular idea or leans into a visual perspective that represents the location or time period that is being captured in the story.

“I think each story has its own representation. You have to allow the audience to immediately identify where they are. So if you’re not using some very strong metaphors you will lose the audience. So the first explosion is very yellow, then we go to France and it’s more blue-ish, you go to Italy it’s very warm and fuzzy, France it’s very warm and fuzzy. So using those visual cliches that we as the people identify with specific countries.”

He doesn’t only create these visual metaphors with colour. On Munich he used zooms to capture the photographic vocabulary of the 1970s when those lenses were popular.

Or in Saving Private Ryan he mimicked the kind of manic, handheld, on the ground style that the real combat cameramen of the time would have been forced to use.

Or in Catch Me If You Can, he differentiated the time periods by giving the 60s scenes a warm, romantic glow and the 1970s scenes a slightly bluer, flatter look.

These visual languages and cues subtly change depending on the movie. They back up each film by using the images to support the story in a way that hopefully goes unnoticed by the audience on the surface, but feeds into how they interpret the movie in an unconscious way.

GEAR

“I look at cameras as a sewing machine. When you talk to the wardrobe designer you don’t ask her what kind of sewing machine do you use, because it’s just a sewing machine. It doesn’t really matter. The equipment, all that stuff is not. What you do with it is essential.”

Some cinematographers like to be consistent with their gear selection to carry their visual trademark across the respective projects that they work on. Kaminski isn’t like that.

Throughout his career he has pulled a variety of optical effects from his big bag of tricks. Sometimes this involves using filters, sometimes photochemical manipulation, other times unique grip rigs or playing with unconventional camera settings.

So, let’s go through a few examples of some gear he has used, starting with his camera package.

He flips between shooting with Panavision cameras and lenses in the US and using Arri cameras when working in Europe. He’s alternated between shooting Super 35 with spherical lenses and in the anamorphic format.

Spherical lenses are more practical as they are faster, have better close focus and are smaller, which makes them better suited for shooting in compact spaces such as car interiors. Examples of some of these lenses that he has used include Cooke S4s, Panavision Primos and Zeiss Standard and Super Speeds.

He usually shoots close ups at a more romantic focal length of around 50mm or longer to flatter the face, but on Schindler’s List he chose to shoot them with a wider 29mm field of view that lent itself to realism.
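To put rough numbers on how much wider a 29mm lens is than a 50mm, here is a minimal sketch using the standard angle of view formula. The frame width is an assumption (a Super 35 frame of roughly 24.89mm), and the function name is just for illustration. The real figure depends on the camera gate and the aspect ratio being extracted.

```python
import math

# Assumption: a Super 35 frame roughly 24.89 mm wide. The real width depends
# on the camera gate and the aspect ratio being extracted.
SUPER35_WIDTH_MM = 24.89


def horizontal_angle_of_view(focal_length_mm, frame_width_mm=SUPER35_WIDTH_MM):
    """Return the horizontal angle of view in degrees for a lens focused at infinity."""
    return math.degrees(2 * math.atan(frame_width_mm / (2 * focal_length_mm)))


# Compare the two focal lengths mentioned for close ups.
for focal_length in (29, 50):
    angle = horizontal_angle_of_view(focal_length)
    print(f"{focal_length}mm: about {angle:.1f} degrees wide")
```

Under these assumptions the 29mm lens sees a noticeably wider slice of the scene, so the camera has to sit closer to the actor to hold the same size close up.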

He’s used anamorphic lenses for their classical Hollywood look, with beautiful flares that are impossible to otherwise recreate. Some examples are the C-Series and more modern T-Series from Panavision.

He has used digital cinema cameras occasionally but almost exclusively shoots features on 35mm film - including his recent work. His choice of film stocks has been extremely varied.

On Schindler’s List he mainly shot on Eastman Double-X 5222 black and white. For specific sequences that required parts of the frame to be colourised, such as the famous shot of the girl in the red dress, he pulled an interesting photochemical trick by recording on Eastman EXR 500T 5296 colour negative film stock and then printing the film onto a special panchromatic high-con stock which is sensitive to all colours and used primarily for titles.

This gave them the look they wanted that best matched the rest of the black and white footage and didn’t contain the blue tint that came with removing the colour from the colour negative in the regular way.

To get a flatter image for the 1970s scenes in Catch Me If You Can he used Kodak 320T stock in combination with low-con and fog filters to purposefully make the images a bit uglier, more neutral and drab. This coincided with the main character’s fall from grace as he came to terms with the real life consequences of his actions.

Or on Saving Private Ryan, he settled on Eastman’s 200T film stock, which he pushed by one stop and put through a film development process called ENR. This both desaturated the stock and sharpened up the look of textures, giving the details in the image a grittiness.

When it comes to lighting with his gaffer he acknowledges that some gaffers are more technical while others are more conceptual. Due to the large scope of the kind of sets he lights it’s more practical for him to describe the lighting he wants in more general terms, such as ‘no backlight’ or ‘this source needs to feel warm’, rather than describing and placing loads of specific units around a set.

“The scope is way too large. You can’t demand every light be placed on set according to your desires, so you have a gaffer who is knowledgeable. On the shooting day or the day before you talk about the specifics of each scene or you adjust the lighting. Or you do the lighting with the gaffer on the given day right after the rehearsal. Surround yourself with the best people so you can work less and I want to work as little as possible.”

Spielberg likes to move the camera in a fluid, expansive way, with rigs such as a Technocrane, that reveals large portions of the location. This adds to his challenge of lighting as it’s far easier to light in a single direction with a 15 degree camera angle than it is to cover 270 degrees of the set.

For other films, such as Saving Private Ryan, a lot of handheld moves were done instead to introduce a feeling of realism that placed the viewer right down on the shoulder of the operator, in the middle of the action.

To inject even more intensity into an already shaky image he used Clairmont Camera’s Image Shaker. This is a device which can be mounted onto the front bars of the camera and vibrates at a controlled level with vertical and horizontal vibration settings which could mimic the effect of the explosions happening around the soldiers.

CONCLUSION

Kaminski uses whatever technical trick he can think of to create visual metaphors that push the story forward, whether that’s done photochemically, with a filter or by physically shaking up the image.

In the end, the technical solution or piece of equipment itself is less important than the cinematic effect that it produces.

Spielberg and Kaminski’s filmmaking is an intertwined creative partnership which has combined Spielberg’s traditionally cinematic visual direction with Kaminski’s focus on visual metaphors. Sometimes this means perfect golden backlight, but other times a feeling of realism that is far more ugly and true to life is what is required.

Alexa 35 Reaction: Arri's First New Sensor In 12 Years

My first reaction to details about the Alexa 35 prior to the release of the camera.

We’ve been hearing rumours that Arri has been developing a new Super 35 4K camera for years… Well, it seems it’s finally time. A brochure for the new Alexa 35 has leaked that outlines all the features of this new camera.

If you follow the channel you’ll know that I don’t really react to news stories but rather focus on discussing a more general overview of filmmaking topics. However, since I think this new Alexa 35 has the potential to take over the high end cinema camera industry in a similar way that the original Alexa Mini did all those years ago, I’m going to run through and react to some of the key features of this new camera.

BACKGROUND

Before I start, I should probably mention that Arri’s approach to camera development and releasing new cameras is a bit different to some other brands. Brands like Red, for example, are known for putting out cameras as soon as they can and then sorting out any bugs or issues that arise in early testing.

Arri is far more conservative and precise about their releases. They don’t release new gear very often. The Alexa 35 represents Arri's first new sensor that they have developed in 12 years. So, when they do choose to unveil a new piece of gear to the public you can rest assured it has been thoroughly tested and carries a reputation that it will live up to all the specs that they mention.

SUPER 35 4.6K

Arri’s cameras are all developed to fulfil a specific section of the cinema market that relates to its sensor size, specs or physical size of the camera. For example, the Alexa Mini was developed as a Super 35 camera which was small enough to be used on a gimbal. Or the Alexa 65 was developed to provide a 65mm digital sensor size.

The Alexa 35 was developed to be an update of the Alexa Mini, with a Super 35 sensor, a small form factor and the crucial update of recording higher resolutions. Apart from its effect on the images, a big reason this increase in resolution was made was to meet the 4K requirements needed to film Netflix Originals. Previously this was only possible with their cameras that had larger sensors like the Mini LF and was unavailable in the Super 35 format.

As I’ve said in a previous video, Super 35 sensors have a different look and field of view than large format cameras. Since it’s been the standard format throughout cinema history, there is also the largest range of cinema lenses to choose from.

SPECS

So let's run through some key specs. Like their other new cameras, the Alexa 35 can record in ProRes or ARRIRAW. It tops out at 4.6K in Open Gate and can record up to 75 frames per second onto the larger 2TB Codex drives, which goes down to 35 frames per second on the 1TB drive.

In regular 4K, 16:9 mode, this frame rate is pushed up to 120 in ARRIRAW. This is a nice upgrade from the Mini LF and will cover most slow motion needs on set, before needing to change to a dedicated slow motion camera like a Phantom.

An impressive feature of this new sensor is that Arri has found an extra one and a half stops of dynamic range in the highlights and another stop in the shadows. This brings the total exposure latitude of the camera to 17 stops.
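To get a feel for what those numbers mean, here is a small sketch of the arithmetic. It assumes the extra stops were simply added on top of the previous sensor's latitude, which is an inference from the figures above rather than a published spec, and the variable names are my own.

```python
def contrast_ratio(stops):
    """Each stop doubles the light, so N stops span a 2**N : 1 brightness range."""
    return 2 ** stops


claimed_total_stops = 17.0    # total latitude quoted in the brochure
extra_highlight_stops = 1.5   # claimed gain in the highlights
extra_shadow_stops = 1.0      # claimed gain in the shadows

# Implied latitude of the previous sensor, assuming the gains were simply
# added on top of it (an inference, not a published figure).
implied_previous_stops = claimed_total_stops - extra_highlight_stops - extra_shadow_stops

print(f"Implied previous latitude: {implied_previous_stops} stops")
print(f"17 stops is roughly a {contrast_ratio(claimed_total_stops):,.0f}:1 brightness range")
```

Because every stop is a doubling, two and a half extra stops is a much bigger jump in the brightness range the sensor can hold than the small number suggests.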

They also claim that the highlights have a naturalistic, film-like roll off to them. To me, how a cinema camera handles the highlights is one of the most important factors in creating a pleasing filmstock-like look. It’s something that the previous ALEV 3 sensor did well, which I’m sure will continue or be improved upon by this new iteration.

As many DPs tend to push a more naturalistic lighting style these days, I think the increased dynamic range that they claim will help control the light in more radical exteriors and make sure there is detail in the highlights from hot windows in interiors.

More manufacturers these days, such as Sony, have been moving to a dual ISO model that has a standard ISO for regular use and a boosted native ISO for low light situations.

It seems Arri hasn’t gone quite this far but has made a move in the direction of improving the low light performance of the camera with what they are calling an ‘Enhanced Sensitivity Mode’. This can be activated when the EI is set between 2,560 and 6,400. They claim this creates a low noise image in low light and is targeted at filmmakers who want to use available light during night shoots.

When it comes to colour, Arri has developed a new workflow called Reveal colour science, which they claim is a simpler workflow for ARRIRAW post production and leads to higher quality images with accurate life-like colour. They also claim that the Alexa 35 footage will be able to be cut with their existing line of Alexa cameras. While I assume the colour will therefore be fairly similar to the existing Arri look, this is going to be something that will need to be seen once footage starts getting released.

TEXTURES

Another new feature of the Alexa 35 that I’m excited about is what they are calling Arri Textures. When digital cameras were originally introduced the common way of working with them was to record as flat a log image as possible, which would then have more room to be manipulated in post production by doing things like creating a look, adding artificial film grain, adjusting saturation, these kinds of things.

I think as cinematographers have gotten more used to the digital workflow there has been a bit of a push to go back to the ways of old where the decisions that cinematographers made on set determined the look of the negative.

Some do this by creating a custom LUT before production, which is then added to the transcoded files that are edited with, so that a ‘look’ for the footage is established early on, rather than found later when it’s handed over to a colourist at the end of the job.

With that said, Arri Textures is a sort of in-camera setting, a bit like a plugin, that defines the amount and character of the grain in the image, as well as the contrast in the detail or sharpness.

So, cinematographers now have the ability to change the way the camera records an image, much like they would back in the day by selecting different film stocks. I think this is a great idea as a tool as it puts control back into the hands of cinematographers and allows them to make these decisions on set, rather than having to fight for their look in the grade.

ERGONOMICS

With all of these new features and high resolution comes a need for more power in order to get all this done. With that in mind, the Alexa 35 will be a completely 24V powered camera - rather than prior cameras that could run off 12V batteries like V-locks as well as 24V power.

This will be done with their new system of B-Mount batteries. I haven’t personally worked with these batteries yet, but one plus I foresee, apart from them providing a higher level of consistent power, is that they can be used by camera operators who operate with their hand on the back of the battery.

This has become a popular way to operate, particularly with a rig like an Easyrig. I always found older gold mount or V-mount batteries had a tendency to lose power and shut down the camera from time to time as the contacts shifted when operated. This should no longer be a problem with the B-mount.

In terms of its form factor, I think this new Alexa is a great size, around the same size as the Mini LF - a little larger than the original Mini but small enough to be used for handheld and gimbal work.

The pictures show the addition of a little menu on the operator’s side of the camera, with quick access to basic settings like frames per second, shutter, EI, ND and white balance. It kind of reminds me of old Arri film cameras that came with a little setting display screen on the operator side.

The main reason I think this will be useful is for when the camera needs to be stripped down, for Steadicam, gimbal or drone, and loses its viewfinder which has the main menu access. On the old cameras if you needed to change settings, you’d have to awkwardly plug in the eyepiece, and wait for it to power up before you could do so, or do it through the Arri app on a phone which can be buggy. This new menu should save time in those scenarios.

Other than that they’ve added some extra user buttons, which remind me of the Amira a bit and are perhaps intended for quicker use in documentary situations. The new camera comes with a bunch of re-designed components, with the intention of making it a small but versatile camera that can be built into light or studio setups.

Finally, one criticism I have is that, like the Mini LF, the Alexa 35 only has 3 different strengths of internal ND: 0.6, 1.2 and 1.8. I’m surprised they didn’t try to add more to compete with Sony’s Venice, which has 8 different stops of internal ND filters from 0.3 to 2.4. I know cinematographers who like shooting on the Venice almost entirely for the ease and speed that having all the internal NDs you could need provides.
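Since ND strengths are quoted as optical densities rather than stops, here is a minimal sketch of the conversion. The function name is my own, and the even 0.3 spacing of the Venice filters is an assumption based on there being 8 of them between 0.3 and 2.4.

```python
import math


def nd_density_to_stops(density):
    """Convert an ND filter's optical density to stops of light reduction."""
    # Transmission is 10 ** -density, and one stop halves the light.
    return density / math.log10(2)


alexa_35_nds = [0.6, 1.2, 1.8]
# Assumption: the Venice's 8 filters are evenly spaced in 0.3 steps.
venice_nds = [round(0.3 * step, 1) for step in range(1, 9)]

for name, densities in (("Alexa 35", alexa_35_nds), ("Venice", venice_nds)):
    stops = [round(nd_density_to_stops(d)) for d in densities]
    print(f"{name}: ND {densities} cuts about {stops} stops")
```

Every 0.3 of density is very close to one stop, so the Alexa 35 jumps in two stop increments while the Venice, under that spacing assumption, lets you step down one stop at a time.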

What A Steadicam Operator Does On Set: Crew Breakdown

In this Crew Breakdown video, let’s take a look at the Steadicam Operator and go over what their role is, what their average day on set looks like, and a couple tips that they use to be the best in their field.

INTRODUCTION

A long time ago, in a world far before low cost gimbals were a thing, there were only a handful of options when it came to moving cameras with a cinematic stability.

You could put a camera on a dolly. You could put a camera on a crane. Which are both great options, but what about if you wanted to do this shot? How do you chase a character over uneven ground, through twists and turns, at a low angle for an extended, stabilised take?

The answer was with a piece of stabilising equipment invented by Garrett Brown, called the Steadicam, that could attach a camera to an operator, giving filmmakers the mobility of a handheld camera combined with a cinematic stability.

This created the new crew position on a film set of Steadicam Operator. So, in this Crew Breakdown video, let’s go over what their role is, what their average day on set looks like, and a couple tips that they use to be the best in their field.

ROLE

“I liked handheld. I did not like the way it looked - then or now. And so what I needed was a way to disconnect the camera from the person.” - Garrett Brown, Steadicam Inventor

Before going over what the role of the Steadicam operator is, let’s take a basic look at how a Steadicam works.

A Steadicam is basically a perfectly balanced, weighted gimbal attached to the camera operator’s body that isolates the camera from the operator’s movement. This allows the camera to be moved around precisely with smooth, stabilised motion.

It can be broken down into three basic sections: the vest, the arm and the sled. The sled includes a flat top stage which the camera sits on and a post which connects the bottom section with a monitor mount and a battery base.

The top stage with camera and the bottom stage with the monitor and the batteries are positioned so the weight of the camera is counterbalanced and even. Like balancing a sword on a finger.

Having two ends which are perfectly balanced both adds weight, and therefore more stability to the rig, and puts the centre of gravity exactly at the operator’s grip, so that they can use their hand to adjust how the camera moves with delicate adjustments.

This hefty weight is supported by a gimbal attached to the post, which attaches to an arm, which then attaches to a vest worn by the operator. The rig’s substantial weight, perfect balance and gimbal allow the operator to manoeuvre the camera around with a floating stability using the motion of their body and deft touches with their grip.

A Steadicam is therefore a great option to move a camera through tight spaces, over uneven terrain, or to do flowing, 360-degree movement around actors in long takes.
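The counterbalancing idea can be sketched as a simple torque balance around the gimbal. This is an idealised illustration rather than how any particular rig is set up: the masses, distances and function names are hypothetical, and each stage is treated as a single point mass on the post.

```python
def balancing_distance(top_mass_kg, top_distance_m, bottom_mass_kg):
    """Distance below the gimbal at which the bottom mass balances the top mass."""
    # Torque balance about the gimbal: m_top * d_top == m_bottom * d_bottom
    return top_mass_kg * top_distance_m / bottom_mass_kg


def centre_of_gravity(top_mass_kg, top_distance_m, bottom_mass_kg, bottom_distance_m):
    """Height of the combined centre of gravity relative to the gimbal (positive is above)."""
    total_mass = top_mass_kg + bottom_mass_kg
    return (top_mass_kg * top_distance_m - bottom_mass_kg * bottom_distance_m) / total_mass


# Hypothetical numbers: an 8 kg camera build 0.2 m above the gimbal,
# counterweighted by 4 kg of monitor and batteries.
bottom_distance = balancing_distance(8.0, 0.2, 4.0)
print(f"Bottom stage sits about {bottom_distance:.2f} m below the gimbal")
print(f"Centre of gravity offset: {centre_of_gravity(8.0, 0.2, 4.0, bottom_distance):.3f} m")
```

A lighter bottom stage has to sit further from the gimbal to balance a heavier camera, which is why the post length and battery placement change from build to build. In practice operators often trim the rig slightly bottom heavy so the post settles upright, so treat the exact zero offset here as the idealised case.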

It’s generally seen as providing more organic motion and the ability to do hard stops with precision better than 3-axis gimbals - which have a drifting motion to them before they come to a resting stop.

The role of the Steadicam operator is an interesting one, as it requires both a deep technical knowledge and proficiency as well as a creative flair and theoretical knowledge on how to move the camera and frame shots to tell a story.

Sometimes, but not always, a Steadicam op will also work as the primary camera operator (or the B-camera operator), operating shots from a tripod head or wheels as well as performing any Steadicam shots that are required.

Their job includes helping to build and balance the camera on the Steadicam rig, discussing a shot with the DP and director and then executing it - often adjusting between takes until the perfect take is in the can.

AVERAGE DAY ON SET

Before the shoot begins, the Steadicam operator will show up to the gear check at the rental house where the camera team tests and assembles the gear. As different jobs will use different cameras and lenses, which come in different weights and sizes, it’s crucial that the camera is properly built and balanced during testing.

Nothing would be worse than building the camera on the day, without a gear check, only to realise that the lens is too front heavy to balance on the Steadicam.

On the day of shooting, the operator will grab a shooting schedule or communicate with the 1st AD to determine what Steadicam shots need to be done and therefore when the camera needs to be built for Steadicam. Sometimes most of the day can be spent doing Steadicam, but usually it will only be reserved for a few shots, in different scenes or setups, spread throughout the day.

If there is a particularly tricky shot, or a choreographed long take that has been pre-planned, the operator may meet with the DP during pre-production, prior to shooting, and walk through the shot to work out how best to pull it off.

When it comes time for Steadicam, the first thing to be done is to build the camera. This is done by the 1st AC or focus puller, who will strip the camera of excess weight, configure the necessary accessories, such as the transmitter or focus motors, in the same place as they did during the gear check, and attach the Steadicam’s sliding base plate to the bottom of the camera.

It is then handed off to the operator who will slide the camera onto the top stage and test it to make sure it is properly balanced on the gimbal. They’ll then throw on the vest, go up with the camera and run through a rehearsal or a rough blocking with the director, actors and DP to work out the movement.

When they’re ready they’ll go for a take. The director and DP will watch a feed of the image transmitted to a monitor and give feedback on things like the speed of the motion or the framing, or suggest a new movement.

The camera team will often hand a wireless iris control to the DP, that they can then use to change the aperture on the lens remotely if there are any changes in light.

Between takes when the camera isn’t needed, the operator will take the weight of the Steadicam off by placing it on a stand.

This is the core of their job. However, since the requirements of different shots can vary hugely depending on the situation, each shot may offer a different challenge when it comes to operating. Sometimes this may be the physical challenge of operating a heavy setup, other times it may be a matter of synchronising the timing of the movement with the actor and focus puller, or the shot itself may require particularly nimble operation.

The Steadicam operator has to be able to coolly and calmly adapt to each situation to provide the creative team with the kind of shot that they imagine under the pressures of a time limit.

TIPS

To become a Steadicam Operator you can’t just show up on set and learn as you go. The reason it is such a niche profession is that it takes lots of training, knowledge, practice and experience to be hired for high end film jobs.

It’s also expensive.

Typically, Steadicam operators buy their own Steadicam, which is a pricey piece of gear, attend Steadicam workshops where they are trained how to operate it, and are then able to rent out their expertise and their rig to productions on jobs.

In recent years Arri also introduced the Trinity, which is similar to a Steadicam but adds a 3-axis camera stabiliser that allows the camera to move on the roll axis, and self balancing features which allow the camera to be moved from low mode to high mode during a shot and the post to be extended for extra reach.

With a traditional Steadicam, operators need to decide before a shot begins whether to shoot in the more common high-mode, or if the camera needs to be close to the ground with the post flipped around and used in low-mode.

Another option sometimes used is to hard mount the arm of the Steadicam on a moving vehicle. The operator then sits next to the rig to operate the camera without having to hold the full weight of it.

An early example of this was worked out by Garrett Brown on The Shining for the famous hallway tracking shots. They hard mounted the Steadicam arm to a wheelchair which could then be pushed through the hotel corridors in either high mode, or inches from the ground in low mode.

Since a Steadicam rig with a cinema camera is extremely heavy, operators try to minimise the amount of time that they carry the rig in order to save their stamina for shooting. Any time the camera isn’t going for a take they’ll use a stand to rest the rig, or have a grip standing close by so that they can hand the post off to them as soon as cut is called.

Communicating with the AD to make sure that the camera only goes up at the last possible moment, and isn’t waiting there for ages while make-up does final checks and the director stands in to give notes, is another good way of minimising time holding the rig.

Since the camera is set to balance perfectly, if there are big gusts of wind the camera can be shaken and experience turbulence. Therefore it’s good to make sure the grip department is carrying a ‘wind block’. This is a sheet of mesh material attached to a frame that is held by grips between the source of the wind and the camera in order to minimise turbulence.

Another crew member that the Steadicam operator needs to communicate with is the focus puller. Since the camera will usually need to alternate between studio builds and Steadicam builds on an average shoot day, the 1st AC and the Steadicam operator should come up with the easiest possible method of changing between these configurations, one that’ll save the production the most time. Because, on a film set more than anywhere else, time is money.

How The French New Wave Changed Filmmaking Forever

Out of all of the film movements I’d say one of the most influential of them was the French New Wave. In this video I’ll outline four things from this film movement that are still present in how movies are made and thought about today, which were responsible for altering the course of filmmaking forever.

INTRO

“He immediately talked about, kind of, the French New Wave portrait of youth.” - Greta Gerwig

“The beginning of Jules and Jim, the first three or four minutes influence the style of Goodfellas and Casino and Wolf of Wall Street and so many.” - Martin Scorsese

“Godard was so influential to me at the beginning of my aesthetic as a director, of, like, wanting to be a director.” - Quentin Tarantino

Throughout the decades, there have been many defining film movements in cinema. Some have had a longer lasting impact than others. Out of all of them I’d say one of the most influential of these movements was the French New Wave, which took place from the late 50s to the late 60s. Its impact can still be seen to this day.

During this time various directors emerged who made films that could broadly be classified by their similar philosophy and approach towards experimentation and style.

Many of these directors began their careers as film critics and cinephiles who wrote for the magazine Cahiers du Cinéma where they rejected mainstream cinema and came up with a sort of film manifesto that encouraged experimentation and innovation.

In this video I’ll outline four things from this film movement that are still present in how movies are made and thought about today, which were responsible for altering the course of filmmaking forever.

AUTEUR THEORY

“An Inquisition-like regime ruled over French cinema. Everything was compartmentalised. This movie was made as a reaction against everything that wasn’t done. It was almost pathological or systematic. ‘A wide-angle lens isn’t used for a close up? Then let’s do it.’ ‘A handheld camera isn’t used for tracking shots? Then let’s do it.’” - Jean-Luc Godard

In 1954 director François Truffaut wrote an article for Cahiers du Cinéma called ‘A Certain Tendency of the French Cinema’, wherein he described his dissatisfaction with the adaptation and filming of safe literary works in a traditional, unimaginative way.

Up until then movies were largely credited to the actors who starred in them, or to the studios and producers involved in their funding and creation.

Instead, the cinema of the French New Wave put forward the idea that the real ‘author’ or ‘auteur’ of a movie should be the director. They should be the primary creative driving force behind each project by creating a visual style or aesthetic specific to them. Their themes, tone, or overall feeling from their films should also be consistent and identifiable across their overall body of work.

If you could glance at a film and immediately tell who the director behind it was - that was a sign it was created by an auteur.

A film by Quentin Tarantino will have ensemble casts, non-linear storylines, chapter divides, mixed genre conventions and pay homage to the history of cinema.

A film by Wes Anderson will have fast-paced comedy, childhood loss, symmetrical compositions, consistent colour palettes and highly stylised art direction.

This idea was revolutionary as it encouraged directors to tell stories through their own distinctive voice, rather than acting as craftsmen that followed the same rules and chiselled out each film the same way for a studio.

All it takes is watching a few trailers or the credits in a film to tell that auteur theory is still alive and well. Many movies use the name of the director as a selling point, even more so than the actors in some cases.

If we turn to short form filmmaking, a huge number of directors of commercials or music videos get hired by clients and agencies because they want their film told in a specific style associated with that director.

You hire The Blaze to direct if you want a character-focused, wildly energetic, passionate, personal journey told with a fluidity of movement. You hire Romain Gavras to direct if you want a carefully coordinated, composed, concept driven set piece.

But this French New Wave idea of the director as an auteur is just the first thing that had an undeniable impact on how cinema today is created.

LOW BUDGET

“I really like Band Apart. In particular it really kinda grabbed me. But one of the things that really grabbed me was that I felt I almost could have done that. I could’ve attached a camera to the back of a convertible and drive around Venice boulevard if I wanted to.” - Quentin Tarantino

In their more financially risky pursuit to break free from the constraints of the traditional mould of French cinema and create their own inventive styles as auteurs, many French New Wave directors had to work within a low budget lane.

This was also influenced by the financial constraints of post-World War Two France.

Rather than seeing it as a disadvantage, a lot of the movies that came out of this period used their lack of resources to break conventional rules and form their own style - which we’ll get into more a bit later.

They took some cues from the Italian Neorealist movement that preceded it, which cut costs by shooting on location and working with non-professional actors in rural areas.

Likewise, many French New Wave films worked on location, with a bare bones approach to lighting and homemade, DIY camera rigs. This allowed them to work quickly, unencumbered by large crews and introduced a more on-the-ground aesthetic to the filmmaking.

This further democratised filmmaking and made it more accessible than ever before. It showed that big studios were not always needed to produce great cinema.

This democratisation of filmmaking expanded further throughout the years, until it exploded even more with the introduction of low budget digital cinema cameras.

There’s a reason that many low budget indie films today still use French New Wave films from this period as a primary reference and inspiration for, not only what is possible to achieve with limited resources, but also the kind of look and style that comes with it.

VISUAL STYLE

“All these films had been very different of what had been French cinema. What was in common was to use a lot of natural light, sometimes use non actors, natural sets, a sort of speed in the inspiration and the work. That is what was in common.” - Agnes Varda

What emerged from this rejection of cinematic tradition in a low budget environment was a burst of films that broke existing filmmaking ‘rules’ and had a vigorously experimental style.

Part of this was informed by a documentary-esque approach to cinematography that freed the actors up to move and improvise. Like documentaries, these films were largely shot at real locations, relied mostly on natural light (which allowed them to shoot 360 degrees in a space), used a reactive, handheld camera and sometimes employed non-professional actors who they’d get to improvise dialogue, blocking and actions.

All this went against the more formal conventions that were previously expected of traditional studio films that were shot in studio sets, off a rigid dolly, with perfect, artificial lighting and precise blocking of a pre-approved screenplay.

In this way the French New Wave paved a path that made it OK for future filmmakers to work in a rougher, more naturalistic style and broke down the very notion that cinematography needs to conform to specific rules.

EXPERIMENTATION

“I think a lot of it has to do with the relentlessness of the voice over and the rapid speech and also the pace of the music under it… It feels like there’s a sense of freedom. Anything could happen at any moment…Narrative is completely fractured I think.” - Martin Scorsese

French New Wave directors saw exciting possibilities for using film as a medium - more like painters or novelists did - which could not only be used to tell stories but also to translate their thoughts or ideas by experimenting with form and style.

Much of this was done in the edit.

Whereas older films may have used a traditional, linear story, various scenes and exposition to unpack characters, films like Jules and Jim used voice over, fast paced music and snappy editing to immediately introduce characters and their relationships in a more fractured way that compressed time into a montage.

Directors like Godard broke down the medium even more into a self-conscious, postmodern vision by having characters literally break the fourth wall and talk directly into the camera, face to face with the audience.

Instead of attempting to suspend disbelief, Godard made his audience very aware that what they were watching was something constructed by an artist.

Breathless also went against a universal rule of cinema and used jump cuts, a technique which cuts forward in time using the same shot, without changing the angle or shot size. The effect is an abrasive ‘jump’ forward in time.

This technique influenced future filmmakers by tearing down the idea that the rules of cinema should be strictly followed. The postmodernism that was pushed by the French New Wave has now seeped into every kind of contemporary visual art - including how many YouTube videos are now edited.

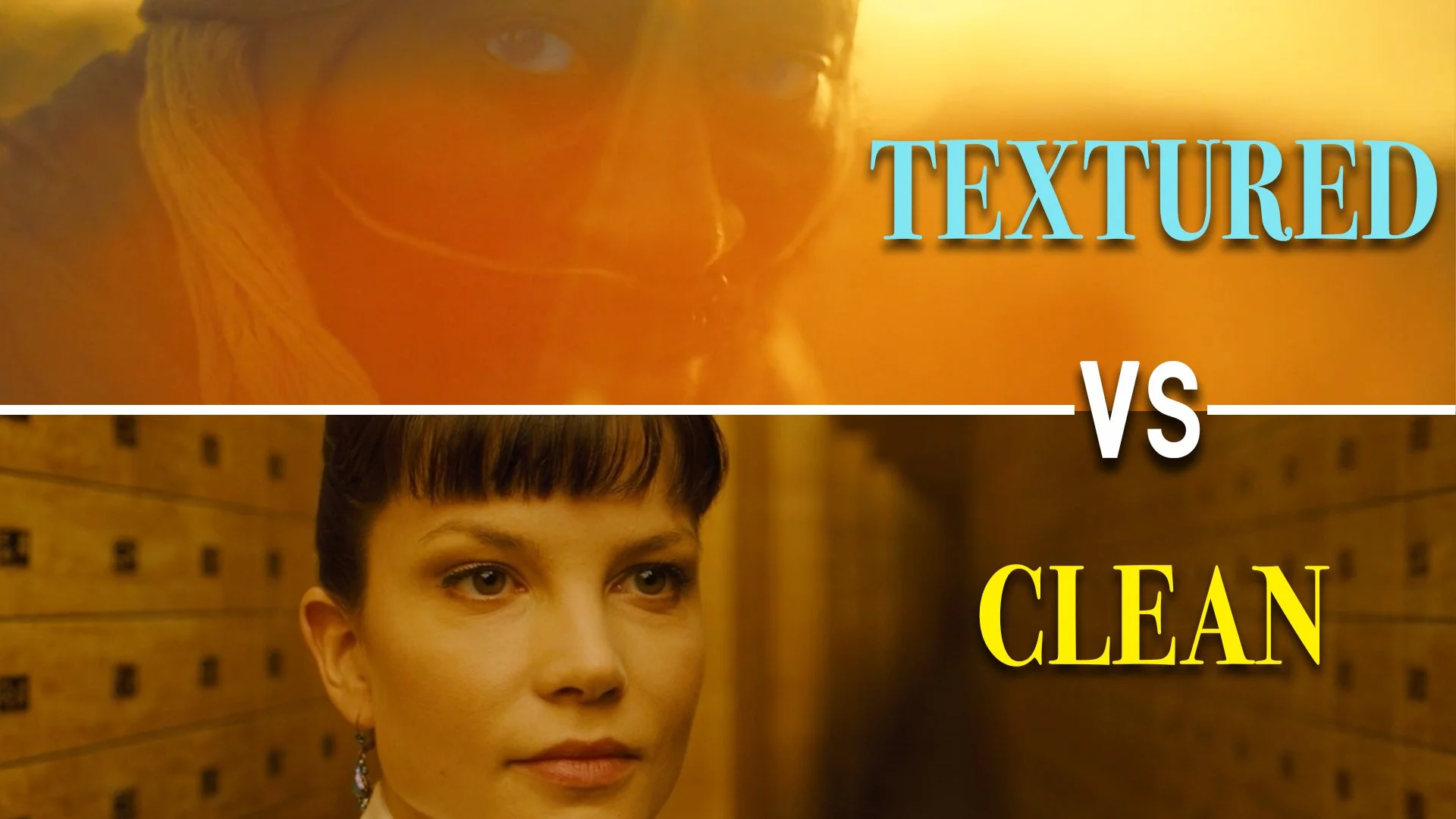

Do Cinematographers Like Lens Flares? Textured vs Clean Images Explained

When it comes to the question of whether clean or textured images should be favoured, cinematographers are generally split into two different camps.

INTRO

“I can’t stand flares. I find any artefact that is on the surface of the image a distraction for me. The audience or I’m then aware that I’m looking at something that is being recorded with a camera.” - Roger Deakins, Cinematographer

“If the light shone in the lens and flared the lens that was considered a mistake. I feel particularly involved in making mistakes feel acceptable by using them. Not by mistakes or anything but by endeavour.” - Conrad Hall, Cinematographer

When it comes to the question of whether clean or textured images should be favoured, cinematographers are generally split into two different camps. Some see their goal as being to create the most pristine, cinematically perfect visuals possible, while others like to degrade the image and break it down with light and camera tricks.

Before we discuss the pros and cons of clean and textured images, we need to understand some of the techniques used by cinematographers that affect the quality of how an image is captured. Then I’ll get into the case that can be made for clean images and the case that can be made for textured images and see which side of the fence you land on in the debate.

WHAT MAKES AN IMAGE CLEAN OR TEXTURED

When cinematographers talk about shooting something that looks clean, they are referring to an image which has the subject in sharp focus and which is devoid of any excess optical aberrations, video noise, grain or softening of the highlights or bright parts in the frame. Some cinematographers however like to introduce different kinds of textures by deliberately ‘messing it up’.

The most easily identifiable optical imperfection is the lens flare. This happens when hard light directly enters the open glass section at the front of a lens and bounces around inside the barrel of the lens off of the different pieces of glass, which are called elements.

So to get a lens flare, cinematographers use a backlight placed directly behind a subject or at an angle that is shone straight at the lens. A common way of doing this is to use the sun as a backlight and point the camera directly at the sun.

In the past, flares were often seen as undesirable so a few tools were introduced to get rid of them. To prevent a flare you need to block the path of any hard light that hits the lens directly. A mattebox is used not only to hold filters but also to block or flag light from hitting the front element. A top flap and sides can be added to a mattebox to cut light, as can a hard matte - which clips inside the mattebox and comes in different sizes which can be swapped out depending on how wide the lens is.

If a shot is stationary and the camera doesn’t move, the lighting team can also erect a black flag on a stand to cut light from reaching the lens.

On the other hand, a trick some use to artificially introduce a flare when there isn’t a strong backlight is to take a torch or a small sourcy light like a dedo and hit the lens with it from just out of shot.

Different kinds of lenses produce different kinds of flares, which are determined by the shape of their glass elements, the number of blades that make up the aperture at the back of the lens and the way in which the glass is coated. Standard, spherical lenses have curved, circular elements that produce round flares that expand or contract as the light source changes its angle.

Anamorphic lenses are made up of regular spherical glass with an added section of cylindrical glass that horizontally squeezes the image. It is then de-squeezed to get a widescreen aspect ratio.
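The de-squeeze step comes down to simple arithmetic: the captured frame is stretched back out in width by the lens's squeeze factor. Here is a minimal sketch of that calculation. The function name, the 2x squeeze factor and the 4:3 capture area are illustrative assumptions rather than values from any particular lens or camera.

```python
def desqueezed_aspect_ratio(captured_width, captured_height, squeeze_factor):
    """Aspect ratio of the image once the anamorphic squeeze is undone."""
    # De-squeezing stretches only the width, by the lens's squeeze factor.
    return (captured_width * squeeze_factor) / captured_height


# Assumed example: a 2x anamorphic lens covering a 4:3 capture area.
ratio = desqueezed_aspect_ratio(4, 3, 2)
print(f"De-squeezed aspect ratio: {ratio:.2f}:1")
```

In practice the final frame is often cropped a little from this figure to reach a standard widescreen delivery ratio.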

Because of this, anamorphic lenses produce a horizontal flare that streaks across the frame. The Panavision C-Series of anamorphic lenses is famous for producing a blue anamorphic lens streak which is associated with many high end Hollywood films.

The glass elements inside a lens have different types of coatings. Modern coatings are used to decrease artefacts and limit flooding the image with a haze when the lens flares.

As technology has improved, these coatings have gotten progressively better at this, and therefore more modern lenses produce a ‘cleaner’ image. One way that cinematographers who like optical texture get around this is to use vintage lenses with older coatings that don’t limit flares as much, and that bloom or create a subtle angelic haze around the highlights. You even get uncoated lenses for those that really want to push that vintage look.

Another option to soften up an image a bit is to use diffusion filters. These are pieces of glass that are placed inside a mattebox and create various softening effects, such as decreasing the sharpness of the image, making the highlights bloom and softening skin tones.

Some examples of these filters include Black Pro-Mists, Glimmer Glass, Pearlescents, Black Satins, Soft FX filters - the list goes on. They come in different strengths, with lower values, such as an eighth, providing a subtle softness and higher values providing a heavy diffusion.

Some cinematographers even go more extreme by using their finger to deliberately smudge or dirty up the front of a filter.

A final way of introducing texture to an image is with grain. This can be done either by shooting on a more sensitive film stock, like 500 ASA, and push processing it, by increasing the ISO or EI on the camera, or by adding a film grain effect during the colour grade in post production.
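As a rough illustration of the push processing mentioned above: each stop of push doubles the exposure index that the stock is rated at, and the lab compensates with extended development, which is what brings out the extra grain. The short sketch below only covers the rating arithmetic. The function name is hypothetical and the push amounts are example values.

```python
def pushed_exposure_index(box_speed, push_stops):
    """Exposure index to rate a stock at when pushing it by push_stops stops."""
    # Each stop of push doubles the rating the stock is exposed at.
    return box_speed * 2 ** push_stops


# Assumed example: a 500 ASA stock rated normally, then pushed one and two stops.
for stops in (0, 1, 2):
    print(f"500 ASA pushed {stops} stop(s): rate at EI {pushed_exposure_index(500, stops)}")
```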

THE CASE FOR TEXTURED IMAGES

“What lenses? Should it be sharp? Should it have flaws? Should it have interesting flares? I always try to be open to everything.” - Linus Sandgren, Cinematographer

Now that I’ve listed all the ways that an image can be messed up by cinematographers, let’s go over some reasons why anyone would actually want to do this in the first place.

Up until about the 1960s or 1970s, the idea of intentionally degrading how an image was captured wasn’t really prevalent. However, movements like the French New Wave or New Hollywood rebelled against capturing a perfect representation of each story and intentionally used things like flares to do this.

Producing optical mistakes from a more on the ground camera created an authenticity and grittiness to the images in a similar way that many documentaries did.

In different contexts, optical aberrations, like lens flares, have been used to introduce different tonal or atmospheric ideas. For example, Conrad Hall went against the Hollywood conventions of the time and embraced flares on Cool Hand Luke to create a sense of heat from the sun and inject a physical warmth into the image that reflected the setting of the story.

Some filmmakers like deliberately using lower gauge film such as 16mm or even 8mm to produce a noisy, textured image. Often this is perceived as feeling more organic and a good fit for rougher, handheld films.

Textured images with a shallow depth of field also feel a bit dreamier, and can therefore be a good tool for representing more experimental moments in a story or to portray a moment that happened in the past as a memory.

Since the digital revolution, many DPs have taken to using diffusion filters and vintage lenses on modern digital cinema cameras - to balance out the image so that it doesn’t feel overly sharp.

Degrading the image of the Alexa by shooting at a higher EI, like 1,600, shooting on lenses from the 1970s, or using an ⅛ or a ¼ Black Pro Mist filter, are all ways of trying to get the more organic texture that naturally happened when shooting on film back into the image.

THE CASE FOR CLEAN IMAGES

“Digital cameras were able to give us a beautiful, very clean, immersive image that we were very keen on… It almost translates 100% what you are feeling when you are in the location.” - Emmanuel Lubezki, Cinematographer

On the flipside, some DPs seek a supremely clean look that pairs sharp, modern glass with high resolution digital cameras.

One reason for this is that clean images better transport the audience directly into the real world, and present images in the same way that our eyes naturally see things. Clean images are regularly paired with a vision that needs to feel realistic.

These cinematographers see any excess grain or aberrations as a distraction that pulls an audience out of a story and makes them aware that what they are seeing isn’t reality and is rather a visual construction.

When light flares across a lens it’s an indication that the image was captured by a camera and may disrupt the illusion of reality.

Sometimes filmmakers also want to lean into a clean, sharp, digital look for the story. It’s like choosing to observe the world directly, in sharp focus, rather than through a hazy, fogged up window.

Cinematography Style: Ari Wegner

Ari Wegner's cinematography isn't tied down to one particular look, and is rather based on a careful and deeply thought out visual style that uses informed creative decisions to present a look that is tailor made for each individual story or script.

INTRODUCTION

“I think that’s the question for any film. How do you get the energy of the script or the idea into it visually? Every film is different and every scene is different but if you know what your aspiration is to do that then you can think of some ideas of how to achieve that.”

In this series I’ve talked before about how some cinematographers like to create a look that is fairly consistent across much of their work, while others distance themselves from one style and mould the form of their cinematography depending on the script or director that they are working with.

Ari Wegner very much falls into the latter category. The films that she shoots are never tied down to one particular look, and are rather based on a careful and deeply thought out visual style that uses informed creative decisions to present a look that is tailor made for each individual story or script.

In this video I’ll unpack this further by diving into the philosophy behind her photography and showing some of the gear that she uses to execute those ideas.

BACKGROUND

Growing up in Melbourne with parents who were both artistically inclined gave her an appreciation for the arts and creative thought from an early age.

Her desire to work in film was sparked by her media teacher exposing her to short films, notably one by Jane Campion. She then changed her focus from photography to cinematography.

After graduating from film school she spent years shooting local independent films and documentaries, before breaking out by photographing Lady Macbeth, which screened at numerous festivals.

Some of the directors she’s worked with include: Janicza Bravo, Jane Campion and Justin Kurzel.

PHILOSOPHY